Vision-Language-Action (VLA) models combine perception, language, and motor control in a single architecture, yet how they translate multimodal inputs into actions remains poorly understood. We apply activation injection, sparse autoencoders (SAEs), and linear probes to six models spanning 80M-7B parameters across 424,000+ rollout episodes on four benchmarks. The visual pathway dominates action generation across all architectures: injecting baseline activations into null-prompt episodes recovers near-identical behavior, while cross-task injection steers robots toward source-task positions (99.8% of X-VLA episodes align with the source trajectory), exposing spatially bound motor programs tied to scene coordinates. Language sensitivity depends on task structure, not model design: when visual context uniquely specifies the task, language is ignored; when multiple goals share a scene, language becomes essential (X-VLA libero_goal: 94%→10% under wrong prompts vs. libero_object: 60-100% regardless). In all three multi-pathway architectures (Pi0.5, SmolVLA, GR00T), expert pathways encode motor programs while VLM pathways encode goal semantics (2× greater behavioral displacement from expert injection), and subspace injection confirms these occupy separable activation subspaces. Per-token SAE processing is essential for action fidelity on most architectures, though mean-pooling improves fidelity on X-VLA. Contrastive identification recovers 82+ manipulation concepts; causal ablation reveals sensitivity spanning 28-92% zero-effect rates independent of representation width. We release Action Atlas for interactive exploration of VLA representations across all six models.

Per-token Sparse Autoencoders + causal interventions for VLA mechanistic interpretability

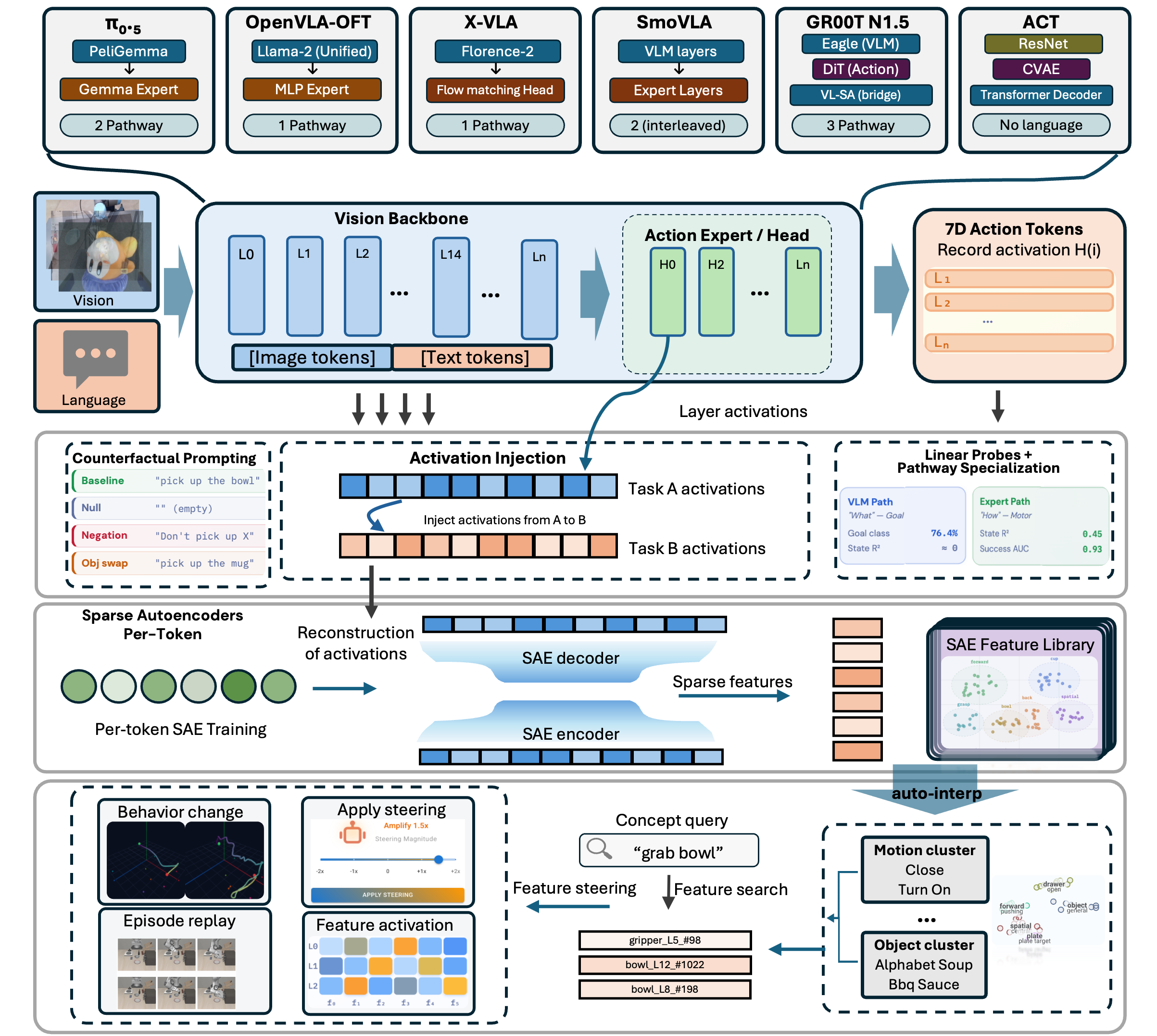

Methodology overview. Top: activations are recorded from VLA backbone and action expert layers during rollout episodes, then replayed under counterfactual conditions (null prompts, cross-task scenes) to establish causal relationships via behavioral change. Middle: per-token SAEs decompose layer activations into sparse features. Bottom: features are clustered, searched, and causally validated through ablation and steering experiments, with results visualized in Action Atlas.

We train 424+ TopK SAEs (k=64) across all six models with 4-8× expansion. Each action token is processed independently to preserve temporal structure. Feature importance is scored via frequency-weighted contrastive selection using Cohen's d, recovering 82+ manipulation concepts.
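The difference between per-token and mean-pooled SAE processing can be illustrated with a minimal NumPy sketch. The weights here are random rather than trained, the toy sizes (`d_model=32`, `k=8`) are chosen for readability, and the function names are hypothetical; none of this is taken from the released code.

```python
import numpy as np

def topk_sae_encode(x, W_enc, b_enc, k):
    """ReLU pre-activations, then keep only the k largest entries per row."""
    pre = np.maximum(x @ W_enc + b_enc, 0.0)
    idx = np.argpartition(pre, -k, axis=-1)[..., -k:]  # indices of the k largest
    z = np.zeros_like(pre)
    np.put_along_axis(z, idx, np.take_along_axis(pre, idx, axis=-1), axis=-1)
    return z

def topk_sae_decode(z, W_dec, b_dec):
    """Linear reconstruction from sparse features."""
    return z @ W_dec + b_dec

rng = np.random.default_rng(0)
d_model, expansion, k = 32, 4, 8          # toy sizes (assumed for illustration)
d_sae = d_model * expansion
W_enc = rng.normal(scale=0.1, size=(d_model, d_sae))
W_dec = rng.normal(scale=0.1, size=(d_sae, d_model))
b_enc, b_dec = np.zeros(d_sae), np.zeros(d_model)

tokens = rng.normal(size=(10, d_model))   # activations for 10 action tokens

# Per-token: every token gets its own sparse code and reconstruction.
z_tok = topk_sae_encode(tokens, W_enc, b_enc, k)
recon_tok = topk_sae_decode(z_tok, W_dec, b_dec)

# Mean-pooled: tokens are averaged first, so token-level structure is lost.
pooled = tokens.mean(axis=0, keepdims=True)
z_pool = topk_sae_encode(pooled, W_enc, b_enc, k)
recon_pool = topk_sae_decode(z_pool, W_dec, b_dec)
```

The per-token path returns one reconstruction per action token, while the pooled path returns a single vector that would have to be shared by all tokens.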

We test causality with four injection conditions: null injection (activations recorded under the correct prompt are injected into episodes run with an empty-string prompt), same-scene steering (redirect to alternate targets), cross-task injection (transfer across visual scenes), and cross-seed (same task, different initial conditions).
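The record-and-replay mechanics behind null injection can be sketched on a toy one-layer policy. The `policy` function, its weights, and the input sizes are all hypothetical stand-ins, not any of the six models. Because the toy has only one hidden layer, injection recovers the baseline action exactly by construction; in a real VLA only selected layers would be overwritten, typically through framework forward hooks.

```python
import numpy as np

rng = np.random.default_rng(0)
W_v = rng.normal(size=(16, 8))   # vision -> hidden (toy weights)
W_l = rng.normal(size=(16, 4))   # language -> hidden
W_a = rng.normal(size=(3, 16))   # hidden -> action

def policy(vision, lang, inject=None, record=None):
    """Toy policy. `record` collects the hidden state; `inject` overwrites it."""
    hidden = np.tanh(W_v @ vision + W_l @ lang)
    if record is not None:
        record.append(hidden.copy())
    if inject is not None:
        hidden = inject
    return W_a @ hidden

vision = rng.normal(size=8)
prompt = rng.normal(size=4)      # stands in for the correct prompt embedding
null_prompt = np.zeros(4)        # stands in for the empty-string prompt

recorded = []
baseline_action = policy(vision, prompt, record=recorded)
null_action = policy(vision, null_prompt)
injected_action = policy(vision, null_prompt, inject=recorded[0])
```

Comparing `injected_action` with `baseline_action` and `null_action` is the behavioral readout; the other three conditions differ only in where the recorded activations come from.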

Concept ablation zeros out specific SAE features during live rollouts, while feature steering scales feature activations by alpha to amplify or suppress encoded behaviors.
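Ablation and steering can both be written as one scaling operation on SAE features, with ablation as the special case alpha = 0. The sketch below assumes the edit is applied as a delta on the original activation, so the SAE's reconstruction error is left untouched; that choice, the helper names, and the feature indices are assumptions for illustration rather than details stated on this page.

```python
import numpy as np

def edit_features(z, feature_ids, alpha):
    """Scale the selected SAE features by alpha (alpha=0 ablates them)."""
    z_edit = z.copy()
    z_edit[..., feature_ids] *= alpha
    return z_edit

def patched_activation(h, z, z_edit, W_dec):
    """Add only the decoded change in features back onto the activation h."""
    return h + (z_edit - z) @ W_dec

rng = np.random.default_rng(0)
z = np.maximum(rng.normal(size=(5, 12)), 0.0)  # toy sparse codes, 5 tokens
W_dec = rng.normal(size=(12, 6))               # toy decoder
h = rng.normal(size=(5, 6))                    # toy layer activations

put_features = [2, 7]                          # hypothetical concept features
z_ablate = edit_features(z, put_features, alpha=0.0)
z_steer = edit_features(z, put_features, alpha=2.0)
h_ablate = patched_activation(h, z, z_ablate, W_dec)
h_steer = patched_activation(h, z, z_steer, W_dec)
```

With alpha = 1 the patched activation equals the original, which makes a convenient sanity check before running live rollouts.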

Ridge regression probes achieve 97-98% R² across all action dimensions. A projection operator test confirms causality: projecting out the probe direction drops R² to 0%.
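The probe and the projection test can be reproduced on synthetic data. The sketch fits a closed-form ridge probe for a single action dimension, removes the probe direction with the projector I - uuᵀ, and re-evaluates the same probe in-sample. The data, the regularization strength, and the function names are assumptions; the real probes are fit on model activations.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 500, 20
X = rng.normal(size=(n, d))                     # synthetic "activations"
true_dir = rng.normal(size=d)
y = X @ true_dir + 0.1 * rng.normal(size=n)     # synthetic action dimension

Xc = X - X.mean(axis=0)                         # center so no intercept is needed
yc = y - y.mean()

def ridge_fit(X, y, lam=1.0):
    """Closed-form ridge solution w = (X^T X + lam I)^-1 X^T y."""
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def r2(y, pred):
    return 1.0 - np.sum((y - pred) ** 2) / np.sum((y - y.mean()) ** 2)

w = ridge_fit(Xc, yc)
r2_before = r2(yc, Xc @ w)

u = w / np.linalg.norm(w)                       # unit probe direction
X_proj = Xc - np.outer(Xc @ u, u)               # apply (I - u u^T) to every row
r2_after = r2(yc, X_proj @ w)                   # same probe, projected inputs
```

After projection the probe's predictions are identically zero, so its R² collapses to zero regardless of how well it fit before.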

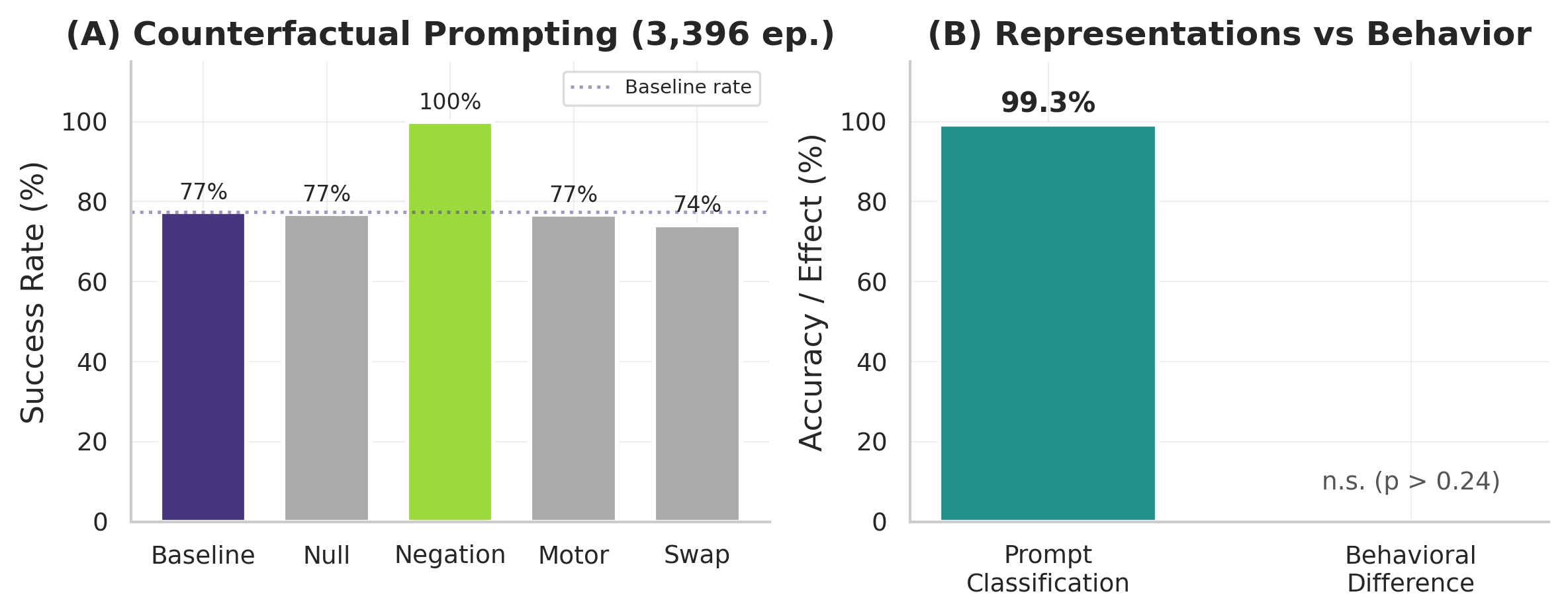

We test language grounding with six prompt variations: baseline, null (empty string), negation, motor commands, object swap, and temporal switches. The tests cover Pi0.5 (3,396+ episodes, ANOVA p = 0.25), SmolVLA (MetaWorld, 4 difficulty levels), and X-VLA (LIBERO + SimplerEnv).
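A one-way ANOVA across prompt conditions compares between-condition variance in outcomes with within-condition variance. The sketch below computes the F statistic by hand on synthetic binary success outcomes; the condition labels, the 0.9 success probability, and the 50 episodes per condition are invented for illustration and are not the reported data.

```python
import numpy as np

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of 1-D samples."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    n_total = sum(len(g) for g in groups)
    grand_mean = np.concatenate(groups).mean()
    ss_between = sum(len(g) * (g.mean() - grand_mean) ** 2 for g in groups)
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

conditions = ["baseline", "null", "negation",
              "motor_commands", "object_swap", "temporal_switch"]
rng = np.random.default_rng(0)
# Synthetic outcomes: same success probability under every prompt condition.
outcomes = [rng.binomial(1, 0.9, size=50) for _ in conditions]
f_stat, df_between, df_within = one_way_anova_f(outcomes)
```

Converting the F statistic into a p-value requires the F distribution; `scipy.stats.f_oneway` computes both in one call if SciPy is available.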

Four findings that hold across five VLA architectures

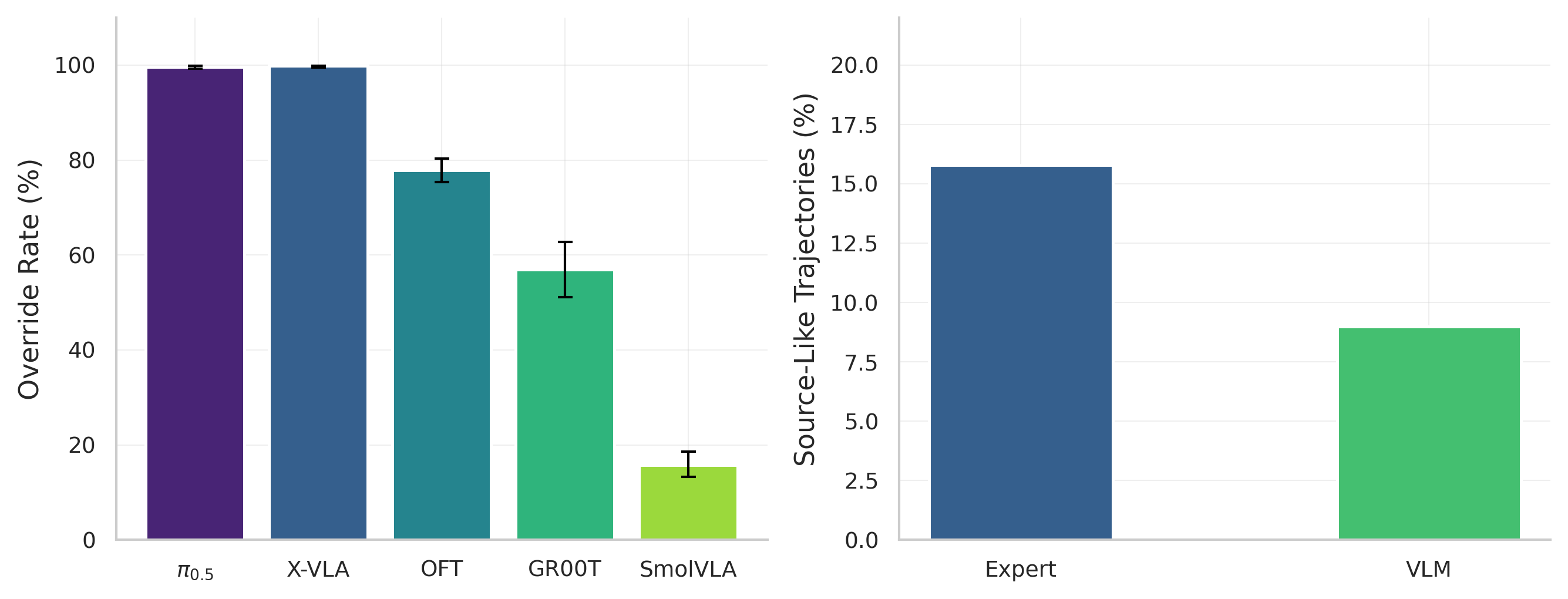

Injecting baseline visual activations into null-prompt episodes recovers near-identical behavior (cos = 0.999). Cross-task injection steers robots toward source-task positions: 99.8% of X-VLA episodes align with the source trajectory, exposing spatially bound motor programs tied to scene coordinates.
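Both readouts above reduce to simple geometry on trajectories. The sketch below computes cosine similarity between two action sequences and a cross-task override rate. This page does not spell out how "aligns with the source trajectory" is scored, so the nearest-endpoint rule used here is an assumption, as are the function names and the toy coordinates.

```python
import numpy as np

def cosine(a, b):
    """Cosine similarity between two (flattened) action trajectories."""
    a, b = np.ravel(a), np.ravel(b)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def override_rate(injected_ends, source_end, target_end):
    """Fraction of episodes ending closer to the source-task position
    than to the target-task position (assumed scoring rule)."""
    d_src = np.linalg.norm(injected_ends - source_end, axis=-1)
    d_tgt = np.linalg.norm(injected_ends - target_end, axis=-1)
    return float(np.mean(d_src < d_tgt))

source_end = np.array([0.0, 0.0, 0.0])   # toy end-effector positions
target_end = np.array([1.0, 1.0, 1.0])
injected_ends = np.array([
    [0.0, 0.0, 0.0],
    [1.0, 1.0, 1.0],
    [0.1, 0.0, 0.0],
    [0.9, 1.0, 1.0],
])
rate = override_rate(injected_ends, source_end, target_end)

trajectory = np.arange(12, dtype=float).reshape(4, 3)
self_similarity = cosine(trajectory, trajectory)
```

A distance-based rule like this only makes sense when source and target positions are well separated in the scene.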

When visual context uniquely specifies the task, language is ignored. When multiple goals share a scene, language becomes essential: X-VLA libero_goal drops from 94% to 10% under wrong prompts, while libero_object maintains 60-100% regardless of prompt.

In all three multi-pathway architectures (Pi0.5, SmolVLA, GR00T), expert pathways cause 2× greater behavioral displacement than VLM pathways. Subspace injection confirms these occupy separable activation subspaces: expert for HOW, VLM for WHAT.
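Subspace injection can be sketched as replacing only the component of an activation that lies inside a chosen subspace, leaving the orthogonal complement untouched. The page does not say how the subspaces are estimated, so the PCA basis below is an assumption, and the function names and sizes are hypothetical.

```python
import numpy as np

def subspace_basis(acts, rank):
    """Orthonormal basis (d, rank) from the top principal directions."""
    centered = acts - acts.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return vt[:rank].T

def inject_subspace(h_target, h_source, U):
    """Swap the span(U) component of h_target for that of h_source."""
    return h_target + (h_source - h_target) @ U @ U.T

rng = np.random.default_rng(0)
acts = rng.normal(size=(200, 16))        # toy activations used to fit the basis
U = subspace_basis(acts, rank=4)

h_source = rng.normal(size=(5, 16))      # activations from the source episode
h_target = rng.normal(size=(5, 16))      # activations from the episode being edited
h_new = inject_subspace(h_target, h_source, U)

# Components inside and outside the subspace after injection.
inside_new = h_new @ U
outside_new = h_new - h_new @ U @ U.T
```

If two pathways occupy separable subspaces, injecting into one basis should change behavior without disturbing what the other basis encodes.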

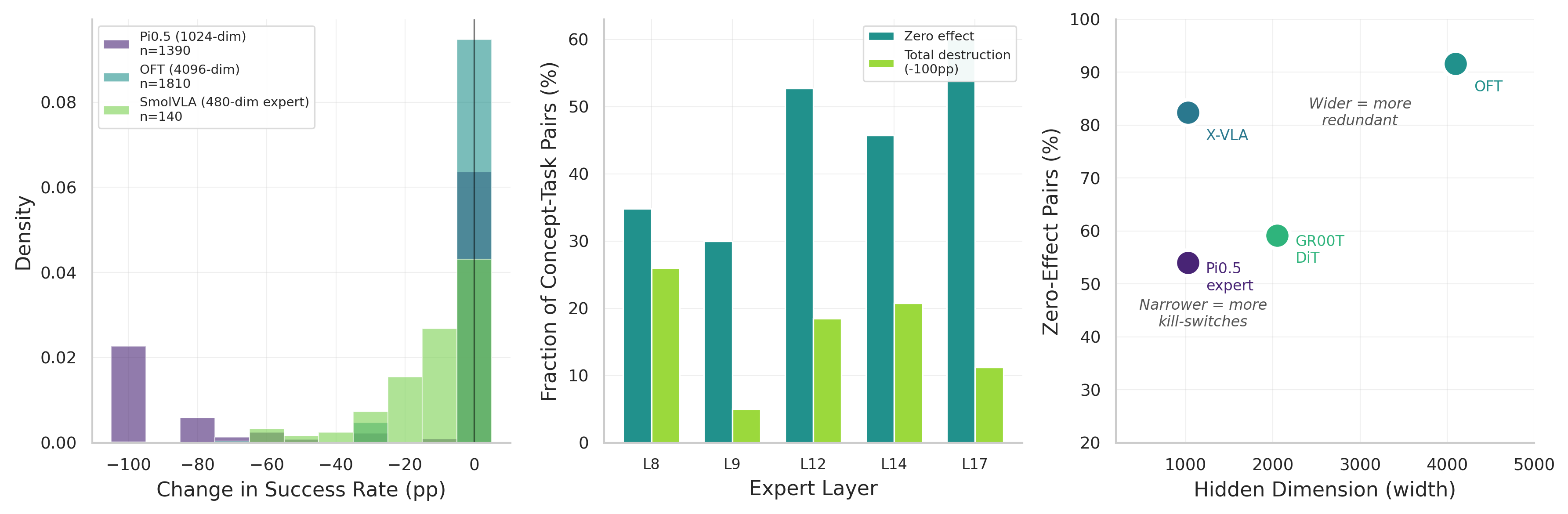

Per-token processing is essential for action fidelity on most architectures, though mean-pooling improves fidelity on X-VLA. Contrastive identification recovers 82+ manipulation concepts; causal ablation reveals sensitivity spanning 28-92% zero-effect rates independent of representation width.
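Contrastive identification can be sketched as scoring each SAE feature by how strongly it separates episodes that contain a concept from episodes that do not. The sketch uses Cohen's d with a pooled standard deviation and multiplies it by the feature's firing frequency in the positive set. The exact form of the frequency weighting is not given on this page, so that product is an assumption, and the data are synthetic with one planted feature.

```python
import numpy as np

def cohens_d(pos, neg):
    """Per-feature Cohen's d using the pooled standard deviation."""
    n1, n2 = len(pos), len(neg)
    pooled_var = ((n1 - 1) * pos.var(axis=0, ddof=1)
                  + (n2 - 1) * neg.var(axis=0, ddof=1)) / (n1 + n2 - 2)
    return (pos.mean(axis=0) - neg.mean(axis=0)) / np.sqrt(pooled_var + 1e-12)

def contrastive_scores(z_pos, z_neg):
    """Effect size weighted by how often the feature fires on positives
    (assumed weighting)."""
    freq = (z_pos > 0).mean(axis=0)
    return cohens_d(z_pos, z_neg) * freq

rng = np.random.default_rng(0)
z_pos = np.maximum(rng.normal(size=(200, 10)), 0.0)  # concept present
z_neg = np.maximum(rng.normal(size=(200, 10)), 0.0)  # concept absent
z_pos[:, 3] += 3.0                                   # planted concept feature

scores = contrastive_scores(z_pos, z_neg)
top_feature = int(np.argmax(scores))
```

Features ranked this way are only candidates; the ablation experiments are what establish whether a high-scoring feature is causally relevant.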

Cross-task displacement override rates. Left: override rate across five models. Pi0.5 (99.6%) and X-VLA (99.8%) show near-complete source behavior transfer; OFT 77.9%; GR00T 57.0% (suite-dependent). Right: SmolVLA pathway displacement (15.8% expert vs. 9.0% VLM).

Language is ignored despite internal distinction. Left: counterfactual prompting across 3,396+ episodes shows no significant behavioral difference (p > 0.24). Right: layer 17 classifiers distinguish prompts with 99.3% accuracy, yet behavior is unchanged.

Concept ablation causal sensitivity across five models. SmolVLA (480-dim expert) is the most sensitive at 28% zero-effect rate; OFT (4096-dim) and X-VLA (1024-dim) are the most resilient at 92% and 82%. Causal sensitivity does not follow representation width.

| Model | Hidden Dim | Zero-Effect Rate | Destruction Rate | Profile |
|---|---|---|---|---|
| SmolVLA Expert | 480 | 28% | 6.3% | Narrow, most sensitive |
| Pi0.5 Expert | 1024 | 54% | 14% | Bimodal, kill-switches at L8 |
| GR00T N1.5 | 1536-2048 | 59% | 9.1% | Pathway-dependent (DiT > Eagle) |
| X-VLA | 1024 | 82% | 2.7% | Resilient despite narrow width |
| OpenVLA-OFT | 4096 | 92% | 0.5% | Wide, most resilient |

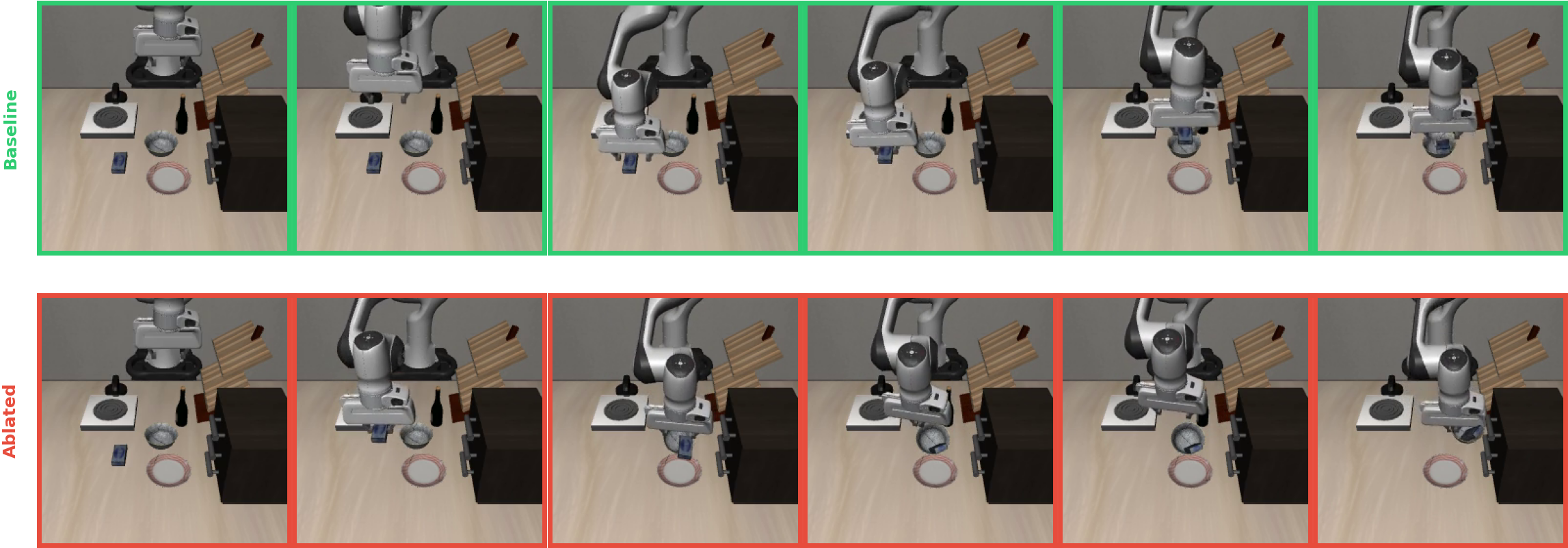

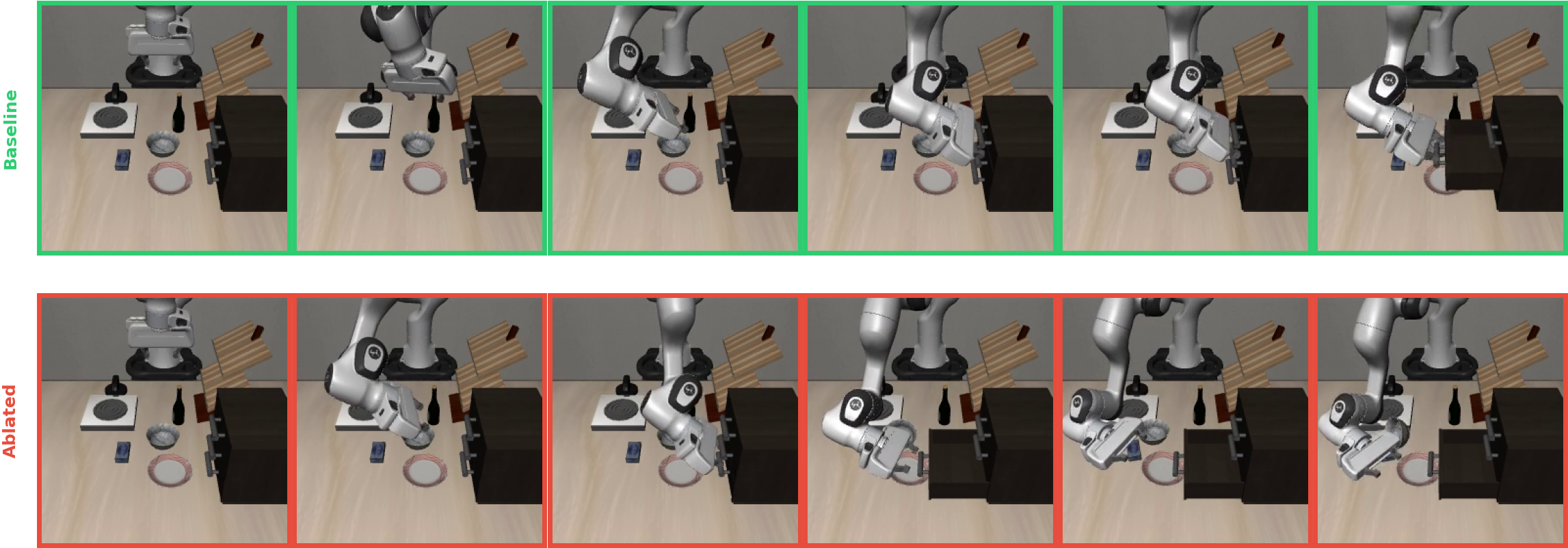

PUT concept ablation (L8): “Put the cream cheese in the bowl.” Top: baseline picks up cream cheese and places it in the bowl (91 steps). Bottom: with PUT features zeroed, the robot drops the cream cheese into the bowl, knocking it over (300 steps, task failure).

OPEN concept ablation (L8): “Open the middle drawer of the cabinet.” Top: baseline reaches for the middle drawer, grasps and pulls it open (140 steps). Bottom: with OPEN features zeroed, the robot opens the bottom drawer instead of the middle, misdirecting the motor program to the wrong target (300 steps, task failure).

Five VLAs and one language-free control, 80M to 7B parameters, three action generation paradigms

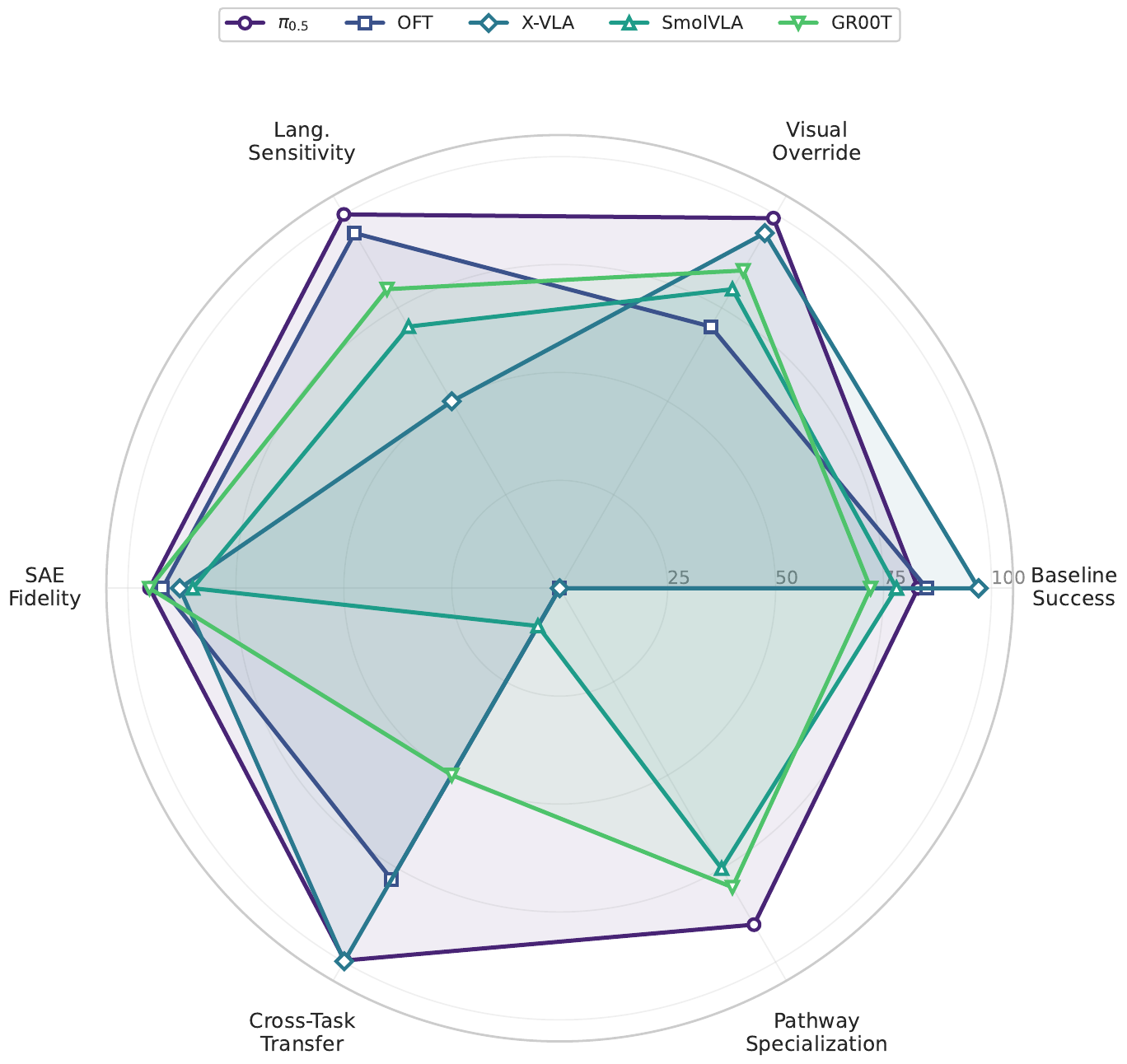

Cross-model capability radar. Five VLAs scored on baseline success, visual override strength, language sensitivity, SAE fidelity, cross-task transfer rate, and pathway specialization. OFT lacks pathway specialization (single-pathway architecture); SmolVLA and GR00T show the strongest pathway specialization alongside Pi0.5.

LIBERO: 4 suites, 40 tasks (MuJoCo tabletop)

SimplerEnv: 10 tasks, 2 embodiments (WidowX + Google Robot)

ALOHA: bimanual tasks (TransferCube, Insertion)

MetaWorld: 50 manipulation tasks (multi-task evaluation)

| Model | Episodes | SAEs Trained | Concepts ID'd | Benchmark(s) |
|---|---|---|---|---|
| Pi0.5 | 65,000+ | 36 | 43 | LIBERO |
| OpenVLA-OFT | 70,700+ | 32 | 45 | LIBERO |
| X-VLA | 80,000+ | 96 | 82 | LIBERO, SimplerEnv |
| SmolVLA | 42,000+ | 192 | 45 | LIBERO, MetaWorld |
| GR00T N1.5 | 164,700+ | 68 | 36 | LIBERO |
| ACT | 1,870 | - | - | ALOHA |
| Total | 424,000+ | 424 | 82+ | 4 benchmarks |

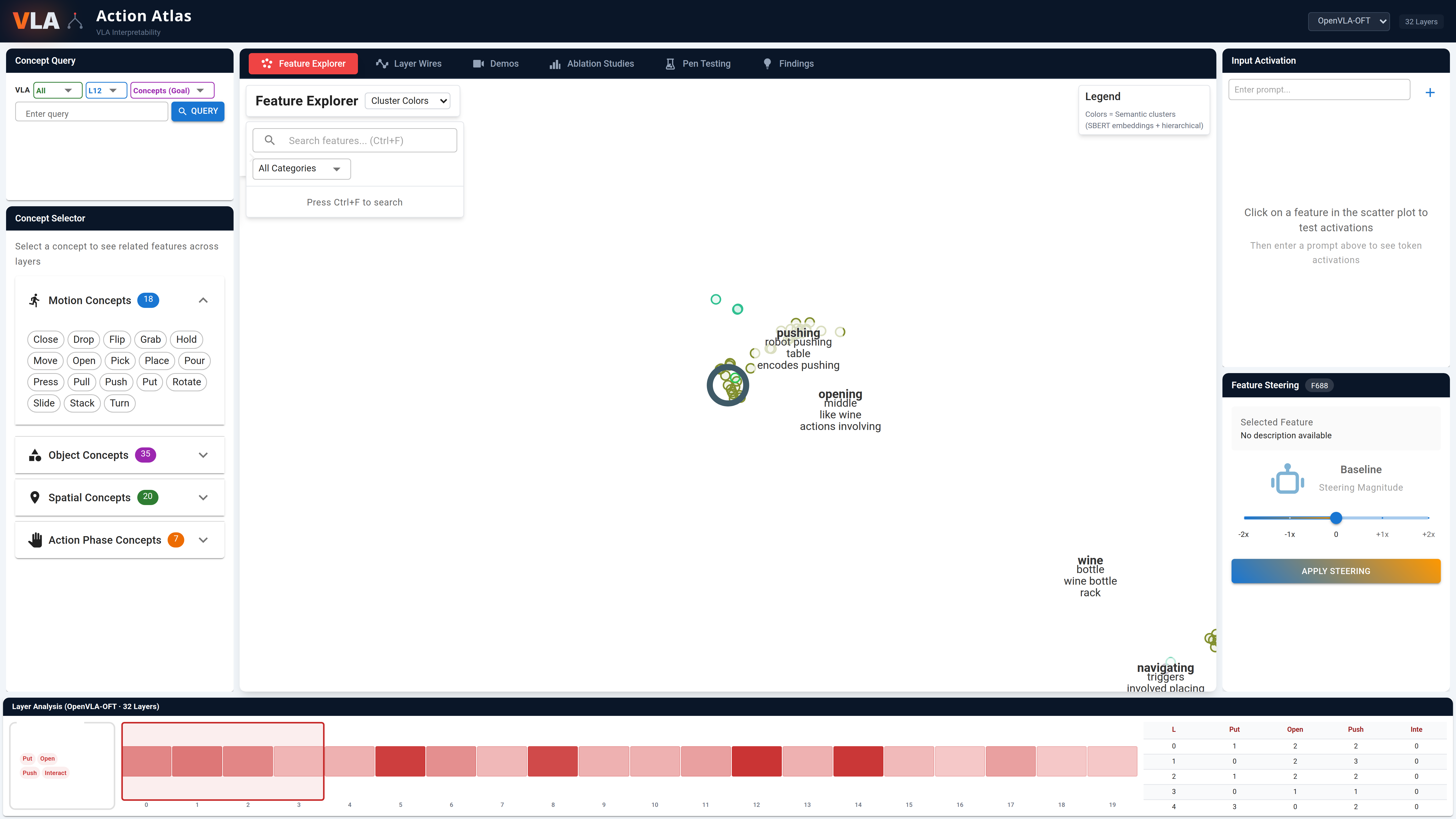

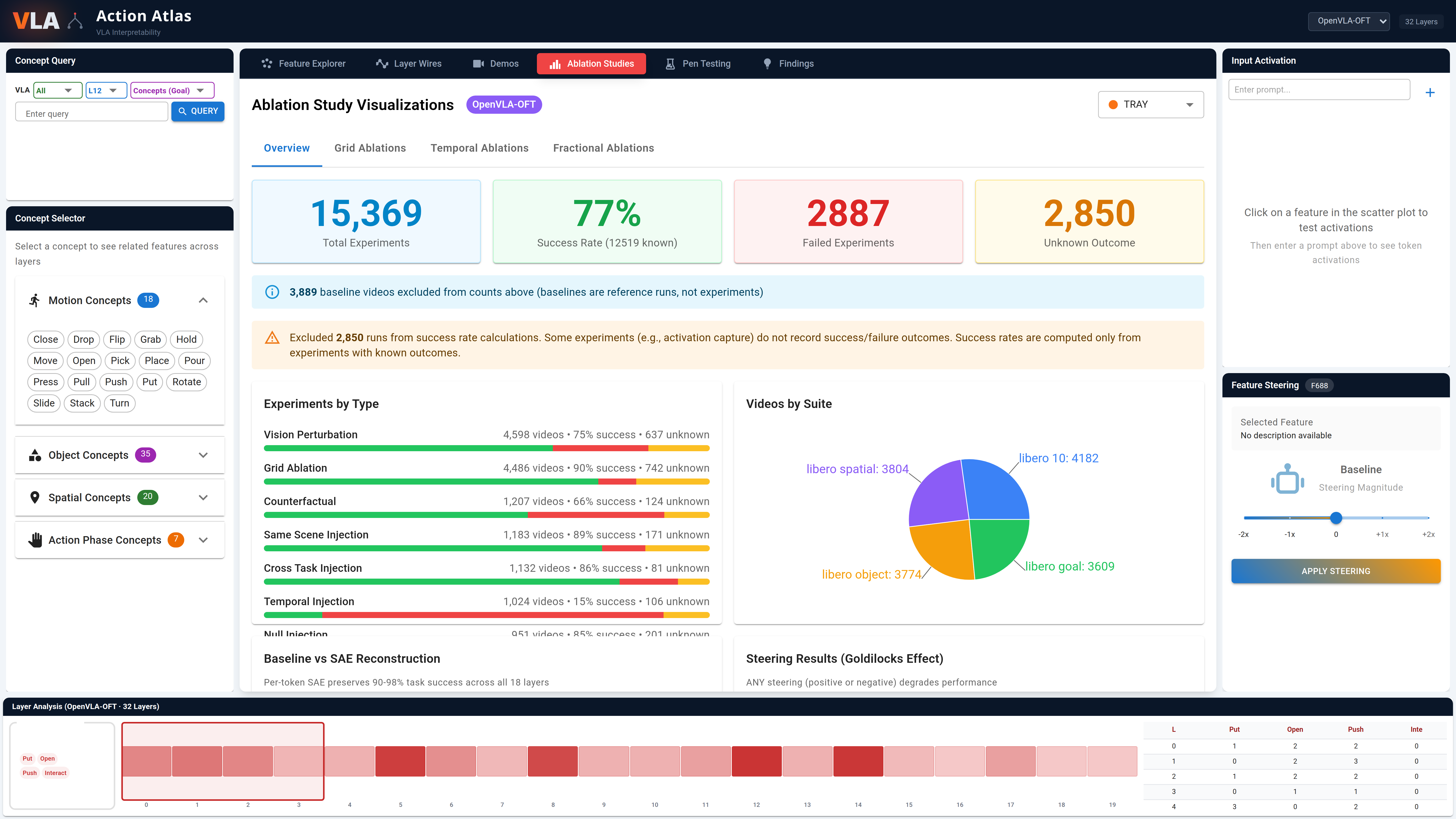

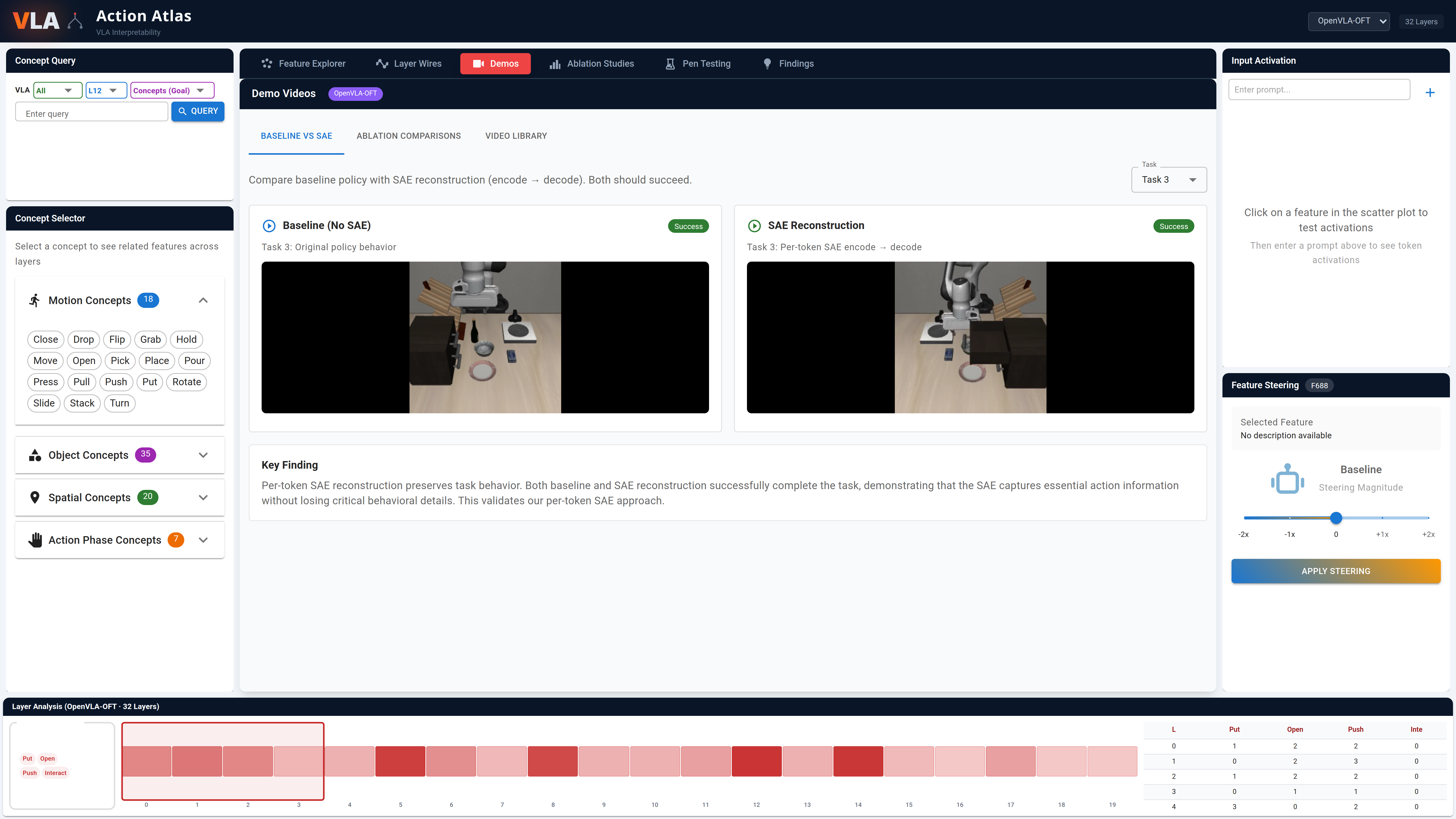

Interactive visualization platform for VLA interpretability, inspired by Neuronpedia

UMAP scatter plots of 4,096+ SAE features with semantic search via SBERT embeddings

Architecture diagrams showing information flow and concept density across transformer layers

308,000+ rollout videos filterable by model, suite, experiment type, and outcome

Side-by-side baseline vs. ablated behavior with success comparison

Vision perturbation results across models with displacement analysis and cross-embodiment data

action-atlas.com

Action Atlas views: Feature Explorer, Layer Circuits, Ablation Studies, Demo Videos

@misc{grant2026featurescreatedequalmechanistic,
  title = {Not All Features Are Created Equal: A Mechanistic Study of Vision-Language-Action Models},
  author = {Bryce Grant and Xijia Zhao and Peng Wang},
  year = {2026},
  eprint = {2603.19233},
  archivePrefix = {arXiv},
  primaryClass = {cs.RO},
  url = {https://arxiv.org/abs/2603.19233}
}